RV1126B AI Camera Edge-Cloud Collaboration Solution

In AI vision projects, the question “Should algorithms run on camera devices or servers?” remains a critical challenge for developers. Traditional approaches force a binary choice: edge devices offer low latency but limited computing power, while cloud-based solutions provide ample processing capability but depend on network infrastructure and incur high costs. Rockchip’s RV1126B AI camera resolves this through edge-cloud collaboration, letting each computation run at the most suitable location. The approach has become a general-purpose vision solution spanning IPC network cameras to automotive applications.

The edge computing market is currently experiencing explosive growth, with the global market size expected to exceed $30 billion by 2025 at a compound annual growth rate of 35%. Understanding the edge-cloud collaborative architecture is not only critical for technology selection in AI vision projects but also forms the foundation for product definition.

Four Core Advantages of Edge-Cloud Collaboration

Unlike pure edge or pure cloud computing models, edge-cloud collaboration combines the strengths of both approaches to address four core challenges: latency, bandwidth, reliability, and privacy. This is why it has become the mainstream solution for AI vision applications.

- Low latency, optimized for real-time scenarios: Traditional cloud computing incurs data round-trip latency exceeding 100ms, which fails to meet real-time requirements such as facial access control. With edge-cloud collaboration, real-time tasks stay at the edge. The RV1126B’s 3TOPS of computing power enables local execution of face and liveness detection, keeping end-to-end latency below 50ms.

- Bandwidth optimization and transmission cost reduction: A single 1080p camera generates approximately 80GB of raw video daily, making full upload prohibitively expensive. Edge-cloud collaboration adopts a “front-end structuring + back-end analysis” model that uploads only structured information, reducing bandwidth consumption by over 90% in industrial scenarios.

- High reliability with continuous operation even during network outages: The device features built-in storage and computing capabilities, enabling independent operation when disconnected from cloud networks to prevent system paralysis caused by network failures.

- Protecting privacy and meeting compliance requirements: Regulations restrict the export of sensitive information such as medical imaging and production data. Processing locally on the device inherently satisfies these requirements, since only anonymized analysis results are uploaded.
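To put the bandwidth figures above in perspective, the arithmetic can be sketched in a few lines of Python. This is only an illustration: the 80GB daily volume and the 90% reduction come from the text, while the function names and the use of decimal gigabytes are assumptions made for the example.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def average_bitrate_mbps(gigabytes_per_day: float) -> float:
    """Average bitrate in Mbit/s for a daily volume in decimal gigabytes."""
    bits_per_day = gigabytes_per_day * 1e9 * 8
    return bits_per_day / SECONDS_PER_DAY / 1e6


def daily_upload_gb(gigabytes_per_day: float, reduction: float) -> float:
    """Daily upload volume after removing the fraction `reduction` of traffic."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return gigabytes_per_day * (1.0 - reduction)


full_upload = average_bitrate_mbps(80)   # uploading everything, all day
structured = daily_upload_gb(80, 0.90)   # upper bound after a 90% cut

print(f"full upload: {full_upload:.1f} Mbit/s sustained per camera")
print(f"after 90% reduction: at most {structured:.0f} GB/day, "
      f"about {average_bitrate_mbps(structured):.2f} Mbit/s")
```

Under these assumptions, a full upload needs roughly 7.4 Mbit/s of sustained uplink per camera, while structured upload stays below about 0.74 Mbit/s.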

Three Core Models of Edge-Cloud Collaboration

Edge-cloud collaboration is not a single monolithic architecture. It takes three distinct forms depending on the business scenario, ranging from basic task allocation to advanced model iteration.

- Task-level collaboration (most commonly used): The edge handles real-time inference, while the cloud performs non-real-time analysis. For example, in a smart park, the RV1126B camera performs real-time face detection and anomaly detection, triggering local alarms while uploading structured data. The cloud server aggregates the data to complete cross-camera trajectory analysis.

- Model-level collaboration (new trend): Lightweight models run on the edge for basic recognition, while large models are deployed in the cloud for deep analysis. In complex scenarios, edge data is uploaded to the cloud, where large models perform secondary analysis to balance real-time performance and analytical depth.

- Training-inference collaboration (closed data loop): The device performs inference and generates data, which is sent back to the cloud to train new models. The updated model is then deployed to the device via OTA, shortening the model iteration cycle from monthly to weekly.
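Model-level collaboration can be pictured as a simple routing decision. The sketch below is illustrative only: the `Detection` type, the `route` function, the 0.8 confidence threshold, and the stand-in cloud model are all hypothetical and are not part of any Rockchip or NeoCAM API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Detection:
    label: str
    confidence: float  # 0.0 to 1.0, produced by the lightweight edge model


def route(detection: Detection,
          cloud_analyze: Callable[[Detection], str],
          threshold: float = 0.8) -> tuple[str, str]:
    """Return (where_decided, final_label).

    Confident edge results are accepted locally; uncertain ones are
    escalated to the cloud model for secondary analysis.
    """
    if detection.confidence >= threshold:
        return "edge", detection.label
    return "cloud", cloud_analyze(detection)


def fake_cloud_model(detection: Detection) -> str:
    # Stand-in for a large cloud model; a real system would make a network call.
    return f"{detection.label} (verified)"


print(route(Detection("person", 0.95), fake_cloud_model))
print(route(Detection("person", 0.55), fake_cloud_model))
```

The threshold is the main tuning knob in such a design: raising it sends more traffic to the cloud for deeper analysis, while lowering it keeps more decisions local and fast.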

Core Capabilities of the RV1126B Chip

The RV1126B is Rockchip’s flagship chip for edge vision computing. Its heterogeneous computing architecture enables efficient real-time processing on the edge, with each module performing specialized tasks without interference. The hardware specifications balance computational power, power consumption, and encoding capabilities to meet edge computing requirements.

Core heterogeneous computing architecture

- CPU: Responsible for control logic; quad-core, clocked at 1.5GHz

- NPU: Specialized in AI inference with integrated 3.0 TOPS computing power

- ISP: Handles image quality, with 12+8M ISP 2.0 supporting 3-frame HDR

- VPU: Handles video encoding while balancing high resolution and high frame rates

Key hardware specifications

- Encoding capability: 4K H.264/H.265 at 45fps + 1080p sub-stream

- Power consumption performance: Typical power consumption ranges from 3-5W, with standby power as low as 1mW

- Computing power: 3TOPS of total computing power, meeting lightweight AI inference requirements on edge devices

Clear division of labor between cloud and edge

The essence of edge-cloud collaboration lies in ‘division of labor.’ The RV1126B solution clearly delineates responsibilities between edge devices and cloud platforms, preventing computational resource waste and redundancy while maximizing operational efficiency.

Core responsibilities at the edge

- Real-time video processing: 4-channel 1080P@45fps parallel hardware encoding without CPU resource usage.

- Lightweight AI inference: Face detection takes approximately 23ms, liveness detection around 15ms, with YOLOv3 achieving 25 FPS on NPU.

- Structured data output: Upload only structured information such as events and features, reducing bandwidth costs by over 70%.
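The structured output described above, combined with the ability to keep working through a network outage, can be sketched as a small store-and-forward outbox. Everything here is an assumption made for illustration: the `VisionEvent` fields, the `EventOutbox` class, and the `send` callback are hypothetical and do not reflect an actual SDK interface.

```python
import json
from collections import deque
from dataclasses import asdict, dataclass


@dataclass
class VisionEvent:
    camera_id: str
    timestamp_ms: int
    event_type: str
    confidence: float


class EventOutbox:
    """Buffers JSON-encoded events while offline and flushes them in order."""

    def __init__(self, send, max_pending: int = 1000):
        self._send = send  # callable(str) -> bool, True when delivery succeeded
        # When the buffer is full, the oldest pending event is dropped.
        self._pending = deque(maxlen=max_pending)

    def publish(self, event: VisionEvent) -> None:
        self._pending.append(json.dumps(asdict(event)))
        self.flush()

    def flush(self) -> int:
        """Send pending events oldest-first; stop at the first failure."""
        sent = 0
        while self._pending and self._send(self._pending[0]):
            self._pending.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._pending)


# Usage with a simulated uplink that starts offline.
link = {"online": False}
delivered = []


def send(payload: str) -> bool:
    if link["online"]:
        delivered.append(payload)
    return link["online"]


outbox = EventOutbox(send)
outbox.publish(VisionEvent("cam-01", 1700000000000, "face_detected", 0.97))
print("pending while offline:", len(outbox))
link["online"] = True
outbox.flush()
print("pending after reconnect:", len(outbox), "delivered:", len(delivered))
```

Each event serializes to a short JSON string, which is what makes structured upload so much cheaper than streaming video.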

Core responsibilities of cloud-side operations

- Cross-camera trajectory tracking: Aggregating multi-device detection results to correlate and reconstruct the complete target motion trajectory.

- Deep analysis of large models: Tasks such as scene understanding and complex behavior judgment are performed by cloud-side GPU clusters.

- Data aggregation and mining: Generating value outcomes such as passenger flow heatmaps and behavioral pattern analysis based on the full dataset.
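As a rough illustration of cross-camera trajectory tracking, the cloud side can group the sightings reported by each camera and order them by time. This sketch assumes that identity matching across cameras has already been done, so each sighting carries a shared `target_id`; the `Sighting` type and `build_trajectories` function are hypothetical.

```python
from collections import defaultdict
from typing import NamedTuple


class Sighting(NamedTuple):
    target_id: str      # identity from feature matching (assumed to be given)
    camera_id: str
    timestamp_ms: int


def build_trajectories(sightings):
    """Group sightings by target and list the cameras visited in time order."""
    by_target = defaultdict(list)
    for sighting in sightings:
        by_target[sighting.target_id].append(sighting)
    return {
        target: [s.camera_id for s in sorted(group, key=lambda s: s.timestamp_ms)]
        for target, group in by_target.items()
    }


reports = [
    Sighting("person-7", "gate", 1000),
    Sighting("person-9", "lobby", 1500),
    Sighting("person-7", "parking", 4000),
    Sighting("person-7", "lobby", 2500),
]
print(build_trajectories(reports))
```

In a real deployment the hard part is the identity matching itself, which is the kind of workload the text assigns to the cloud.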

Flexible deployment modes

The RV1126B Edge-Cloud Collaboration Solution offers three flexible deployment modes, catering to both new deployments and legacy system upgrades, significantly reducing implementation costs for AI vision projects.

- Single-node AI camera: Designed for standalone applications including IPC systems, facial recognition turnstiles, and in-vehicle DVRs. It independently handles data collection, analysis, and reporting, with 3TOPS of computing power to meet most basic AI vision requirements.

- Cluster server: Suitable for multi-channel concurrent scenarios such as industrial parks and shopping malls, capable of supporting up to 72 RV1126B core boards while processing 288 channels of 1080p video streams. Ordinary cameras can be upgraded to AI cameras after integration, reducing upgrade costs by 40%-60%.

- Edge computing box: Specifically designed for retrofitting existing infrastructure. By integrating traditional cameras, it upgrades legacy systems to intelligent analytics platforms, reducing renovation costs by 70%.
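The cluster figures above are consistent with four 1080p channels per core board (72 × 4 = 288), which matches the 4-channel encoding figure quoted for the edge. A small sizing helper can make that relationship explicit. This is a sketch under that assumption; the constant and function names are made up for the example.

```python
import math

CHANNELS_PER_BOARD = 4   # assumed: inferred from 288 channels / 72 boards
MAX_BOARDS = 72          # cluster server limit quoted in the text


def boards_needed(camera_channels: int) -> int:
    """Number of core boards required for a given count of 1080p channels."""
    if camera_channels < 0:
        raise ValueError("camera_channels must be non-negative")
    boards = math.ceil(camera_channels / CHANNELS_PER_BOARD)
    if boards > MAX_BOARDS:
        raise ValueError("exceeds the capacity of a single cluster server")
    return boards


print("cluster capacity:", MAX_BOARDS * CHANNELS_PER_BOARD, "channels")
print("boards for 50 cameras:", boards_needed(50))
```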

Results across deployment scenarios

The RV1126B edge-cloud collaborative solution has reached mature deployment in three key applications (IPC network cameras, facial recognition turnstiles, and vehicle-mounted cameras) thanks to its balanced performance in computing power, power consumption, and compatibility. Each scenario has been optimized with tailored technical enhancements.

IPC network camera (basic solution)

- Access method: Ethernet connection, RTSP streaming to the cloud, outputting 4K main stream and 1080p sub stream

- Core advantages: YOLOv3 achieves 25 FPS with dynamic bitrate optimization saving 50% of bitrate; directly replaces standard IPC main controller while maintaining compatibility with existing camera modules. Quick video pipeline setup is enabled through RKMedia framework integration.

Facial recognition turnstile (most mature application scenario)

- Core solution: Binocular system (RGB color image acquisition + IR infrared image acquisition) combined with liveness detection for attack prevention.

- Measured data: Face detection 23ms + binocular liveness detection 15ms + feature extraction 25ms; supports a database of 100,000 faces with recognition accuracy above 99.7%.

- Hardware specifications: Dual 2-megapixel cameras, 4.3mm lens (70-degree field of view), recognition range approximately 0.8 meters.
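The measured stage timings above can be added up into a simple latency budget. The sketch assumes the three stages run strictly one after another with no overlap, and it ignores image capture and database search time, neither of which is quoted in the text.

```python
# Stage timings in milliseconds, taken from the measured data above.
STAGES_MS = {
    "face_detection": 23,
    "liveness_detection": 15,
    "feature_extraction": 25,
}


def pipeline_latency_ms(stages: dict) -> int:
    """Total latency when the stages run sequentially."""
    return sum(stages.values())


def max_sequential_rate(stages: dict) -> float:
    """Upper bound on recognitions per second for a sequential pipeline."""
    return 1000 / pipeline_latency_ms(stages)


total = pipeline_latency_ms(STAGES_MS)
print(f"detection + liveness: "
      f"{STAGES_MS['face_detection'] + STAGES_MS['liveness_detection']} ms")
print(f"full pipeline: {total} ms, "
      f"about {max_sequential_rate(STAGES_MS):.1f} recognitions/s")
```

Under these assumptions, detection plus liveness takes 38ms and the full pipeline takes 63ms per face.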

Vehicle-mounted camera / Driving recorder

- Key features: 6-DOF digital image stabilization (eliminating driving vibrations), multi-source fusion (visible light + infrared thermal imaging), and hardware-level national cryptographic standard SM2/SM3/SM4 security support

- Industrial-grade compatibility: Standard operating temperature range of -20°C to 60°C; optional industrial-grade range of -40°C to 85°C, suitable for complex automotive environments.

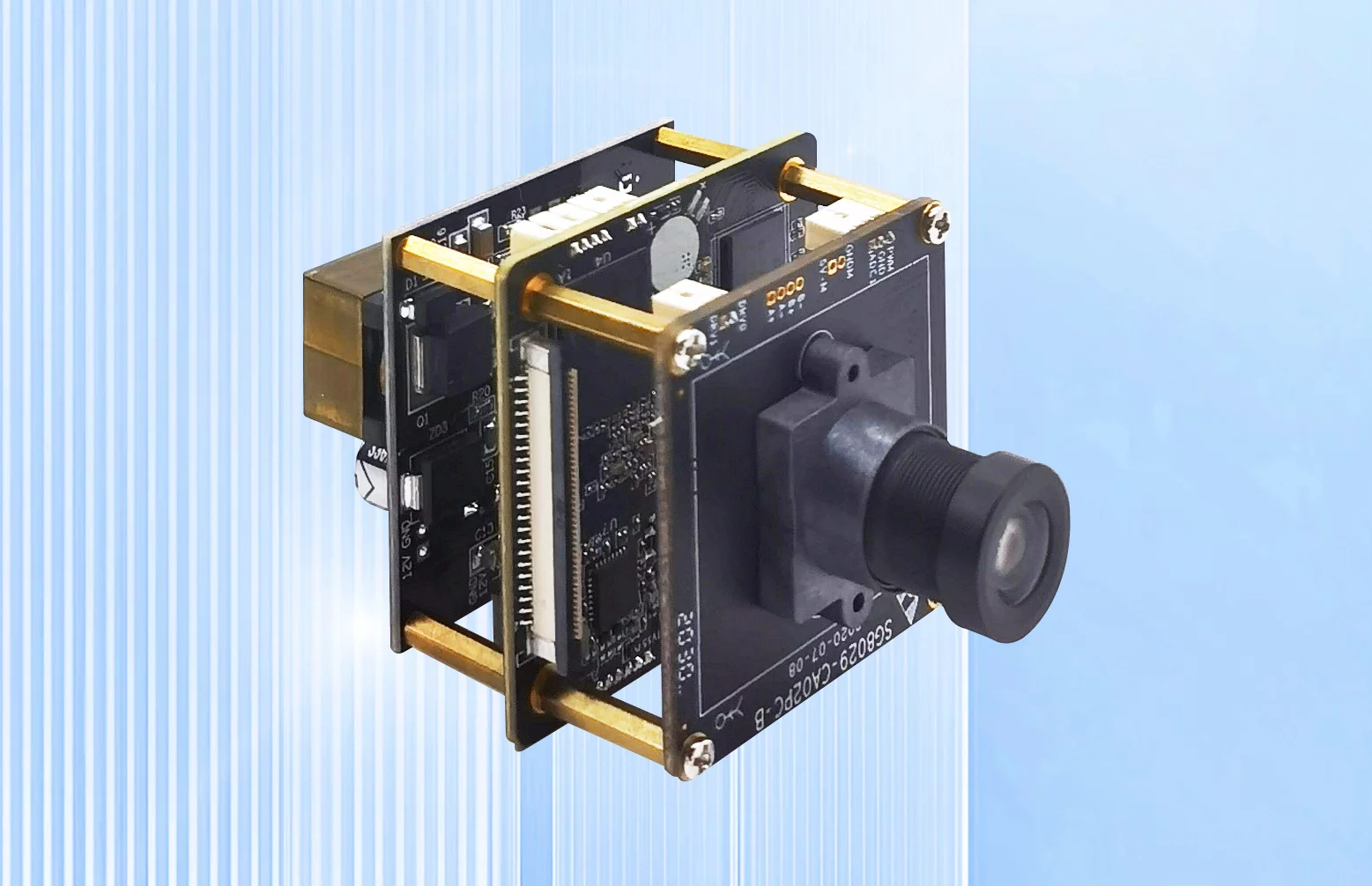

Developer Support

The Smartgiant NeoCAM AI is a customizable AI camera hardware development platform built on the Rockchip RV1126B processor. It supports Sony IMX series sensors and PoE for power and data transmission, and adopts a 38-board standard architecture for rapid, on-demand casing customization. The platform covers the entire lifecycle from development to validation and mass production. Hardware highlights:

- AI camera modules based on Rockchip RV1126

- Quad core ARM Cortex A7 processor

- NPU performance up to 3.0 TOPS

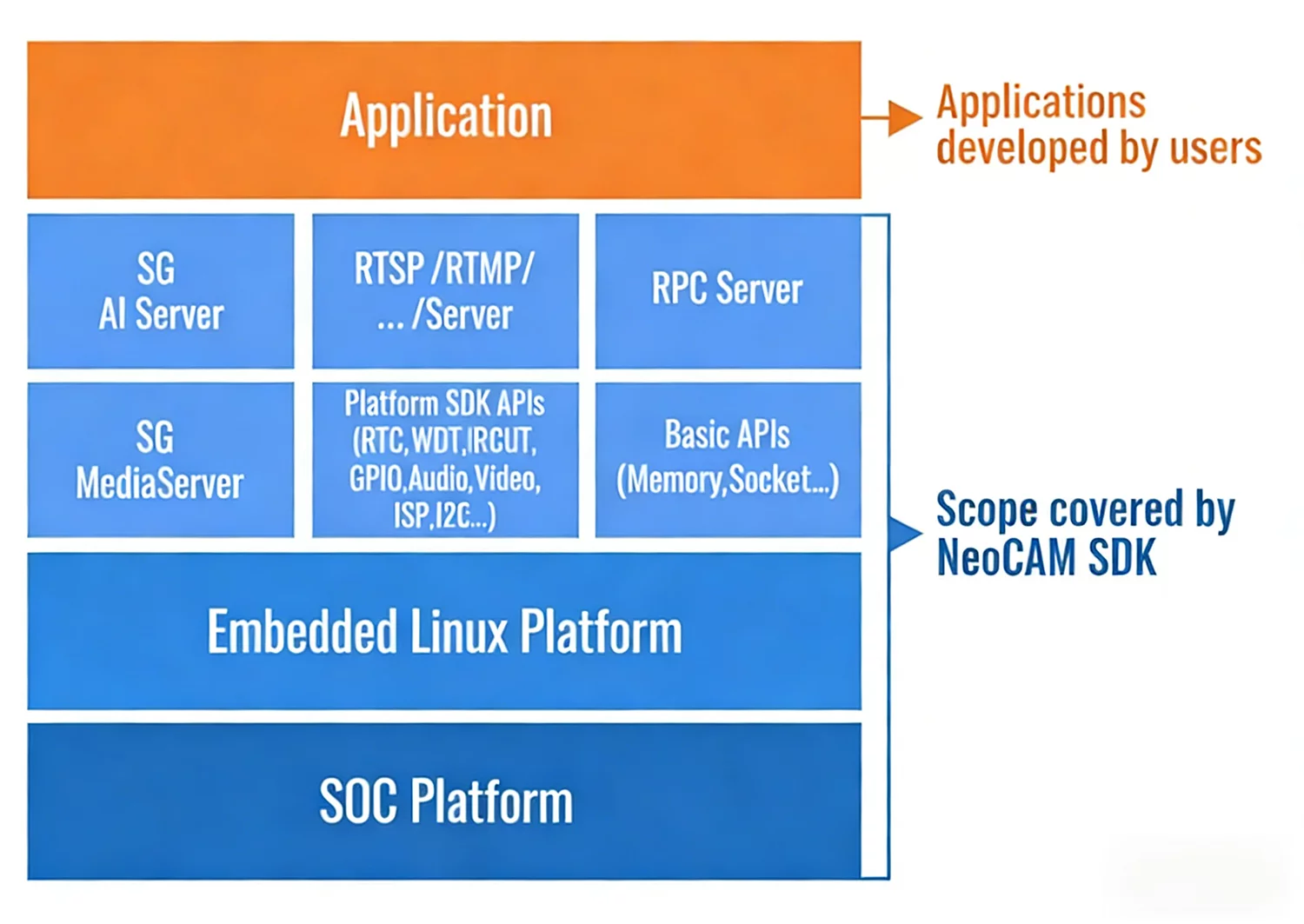

On the software side, the Smartgiant NeoCAM AI camera comes with a comprehensive SDK that includes system components, drivers, image processing modules, and AI application interfaces. The platform supports Python, C, and C++ for both product development and preliminary research, which lowers development complexity and shortens the path from AI algorithm development to hardware deployment. Customers can carry out secondary development for their specific needs, integrate proprietary algorithms, and build customized functionality.

The NeoCAM SDK uses a layered architecture that encapsulates core functionality such as hardware drivers, image processing, and media services behind standardized APIs. Developers can focus on AI model development and business logic without needing an in-depth understanding of the underlying technical details.

- Integrated basic camera platform, eliminating the need to focus on systems, drivers, and image management.

- Efficient AI application interface, fast and easy-to-use AI model deployment and verification.

- Support for development in Python, C, and C++.

Summary

The core of RV1126B’s edge-cloud collaboration lies in precise computing allocation: real-time tasks remain on the edge while high-computational-load operations are handled by the cloud. Bandwidth-sensitive data is retained locally, while high-value data is uploaded in structured formats. This solution breaks the “edge-cloud binary choice” dilemma, offering balanced advantages in computing power, energy efficiency, and ecosystem integration. It serves as a universal AI vision solution spanning from IPC to automotive applications, delivering tangible results across diverse scenarios including security, industrial, commercial, and catering sectors.

Future smart cameras will no longer be mere imaging devices, but edge nodes capable of sensing, understanding, and making decisions. For AI vision developers, the core selection criteria will evolve from “where algorithms run” to “which tasks are performed on the edge, which are handled in the cloud, and how to achieve more efficient collaboration.” Edge-cloud coordination is precisely the key to this upgrade.

Contact Us

Smartgiant Technology 1800 Wyatt Dr, Unit 3, Santa Clara, CA 95054.

Email: info@smartgiant.com
